SOURCE: Epp Tool via Freedom Research

For ten years, the European Commission has been organizing censorship campaigns around the world.

The Committee on the Judiciary of the U.S. House of Representatives has published a report, “The Foreign Censorship Threat, Part II: Europe’s Decade-Long Campaign to Censor the Internet Globally and Its Harmful Impact on American Freedom of Speech,” describing how European Union laws, regulations, and court rulings are forcing companies to implement censorship around the world.

The Committee bases its report largely on internal documents from technology companies, minutes of secret meetings, emails, and other materials that the companies submitted in response to subpoenas from the Committee. It concludes that the European Commission (EC) has been organizing censorship campaigns around the world for at least ten years. According to the report, the EC has successfully pressured major social media platforms to change their content moderation rules, including by removing “inappropriate” content. According to the U.S. House Judiciary Committee, the European Commission often considers truth and political speech to be “inappropriate” content and refers to its actions as a fight against “hate speech” and “disinformation.” This is particularly striking in the context of some of the most important political debates of recent years, such as the COVID-19 pandemic, immigration, and transgender ideology. In doing so, the EC pays disproportionate attention to conservative content and interferes in elections in both Europe and the US.

European Censorship Began to Sprout 10 Years Ago

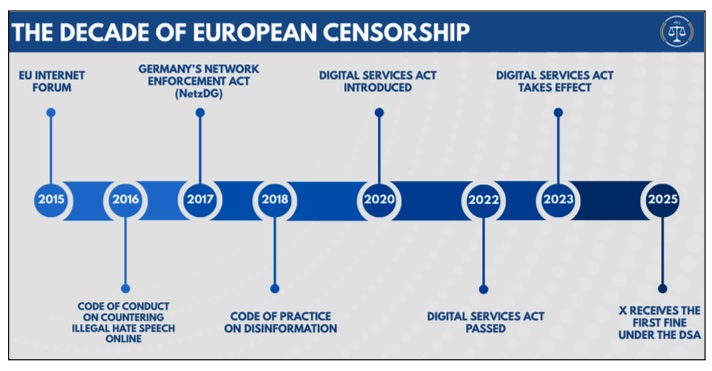

The Committee estimates that European censorship campaigns began in the mid-2010s, when social media became increasingly important in political debate. From the outset, senior EU leaders envisioned a sweeping digital censorship law that would give the European Commission complete control over online discourse. “Hate speech” and “misinformation” became labels of sorts that European agencies used against political discourse they disagreed with or perceived as threatening to their power. Around the same time, European concerns about so-called hate speech were fuelled by the wave of immigration that swept across the continent, sparking new political debates about multiculturalism, assimilation, and the threat of terrorism.

According to the Committee on the Judiciary, the first significant milestone in the fight against hate speech and misinformation came in 2015, when the Commission established the EU Internet Forum (EUIF). The forum's primary goal is to “address the misuse of the internet for terrorism,” but in pursuing this goal it began to “encourage” platforms to censor legitimate political speech. By 2023, the EU Internet Forum had completed a handbook that provides technology companies with “recommendations” on how to moderate legitimate, non-violent speech, such as populist rhetoric, political satire, anti-government/anti-EU, anti-elite, anti-immigration, anti-LGBTIQ content, and Islamophobic content, as well as meme subculture. In other words, the EUIF advises platforms on how best to censor so-called borderline content.

Efforts to monitor and moderate European web content continued in the following years. In 2016, the EC adopted a Code of Conduct on Countering Illegal Hate Speech Online, and in 2018, a Code of Practice on Disinformation. According to EC officials, both codes were voluntary for platforms. At the same time, platforms knew that they would eventually have to comply with EU censorship requirements or face heavy fines. It was therefore less painful to start complying immediately.

Facebook, Instagram, TikTok, and Twitter promised to implement the hate speech code and censor hate speech, which was defined rather vaguely in the code of conduct. In January last year, the European Commission decided to incorporate the hate speech code into the Digital Services Act (DSA), which is mandatory for large online platforms. This means that the hate speech guidelines are now mandatory as well. The platforms also began to follow the disinformation code, which requires large online platforms to reduce the visibility and spread of information considered to be disinformation. From 2022, the code of practice included an obligation for platforms to participate in working groups where online platforms, civil society organizations (CSOs), and European Commission agencies discuss how to censor so-called disinformation. At the working group meetings, the EC pressured platforms to change their content moderation rules and implement additional content censorship measures. Fact-checking, elections, and the demonetization of conservative news outlets were also discussed, and a “consensus” was reached under strong pressure from the EC.